Everything You Need to Know About the Dual-, Triple-, and Quad-Channel Memory Architectures

Intro

The system’s RAM (Random Access Memory) is one of the main bottlenecks that keep a PC from reaching its maximum performance. This happens because the processor (CPU) is faster than the RAM and often has to wait for the RAM to deliver data. During this wait time the CPU is idle, doing nothing (that’s not entirely true, but it fits our explanation). In a perfect computer, the RAM would be as fast as the CPU. Dual-, triple-, and quad-channel are techniques used to double, triple, or quadruple the theoretical maximum transfer rate between the memory controller and the RAM, thus increasing system performance. In this tutorial, we will explain everything you need to know about these technologies: how they work, how to set them up, how to calculate transfer speeds, and more.

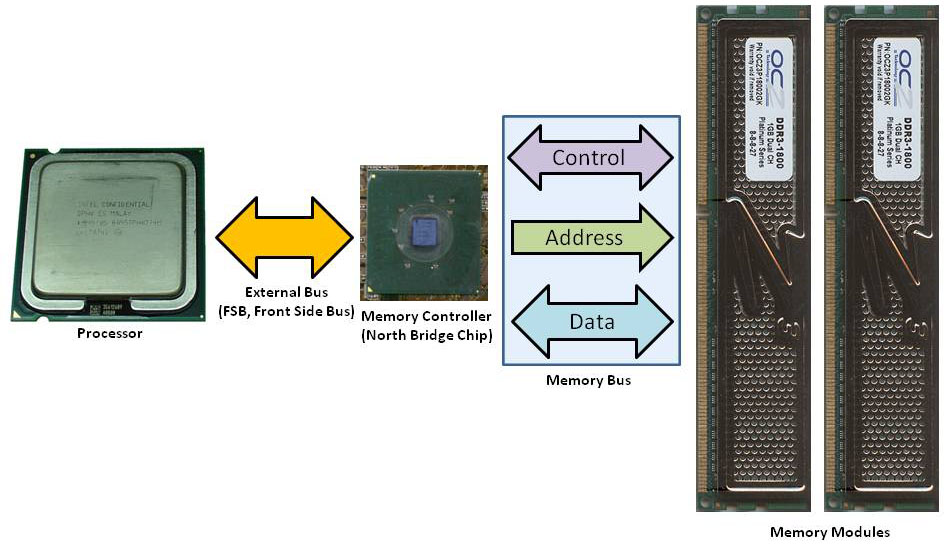

Before going further, let’s first explain how the RAM is traditionally connected to the system.

The RAM is controlled by a circuit called a memory controller. Currently, most processors have this component embedded, so the CPU has a dedicated memory bus connecting the processor to the RAM. On older CPUs, however, this circuit was located inside the motherboard chipset, in the north bridge chip. (This chip is also known as MCH or Memory Controller Hub.) In this case, the CPU doesn’t “talk” directly to the RAM; the CPU “talks” to the north bridge chip, and this chip “talks” to the memory. The integrated memory controller provides better performance, since there is no “middleman” in the communications between the CPU and the memory. In Figures 1 and 2, we compare the two approaches.

The RAM is connected to the memory controller through a series of wires, collectively known as a “memory bus.” These wires are divided into three groups: data, address, and control. The wires from the data bus will carry data that is being read (transferred from the memory to the memory controller) or written (transferred from the memory controller to the memory, i.e., coming out of the CPU).

The wires from the address bus tell the memory modules exactly where (which address) that data must be retrieved or stored. The control wires send commands to the memory modules, telling them what kind of operation is being done – for example, if it is a write (store) or a read operation. Another important wire present on the control bus is the memory clock signal.
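The three groups of wires can be pictured with a small toy model. This is only an illustrative sketch, not how real memory hardware is programmed: the names `Command`, `BusTransaction`, and `ToyMemoryModule` are hypothetical, and the clock signal, timing, and burst transfers are ignored.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Command(Enum):
    """Control wires: tell the module which operation is requested."""
    READ = "read"
    WRITE = "write"


@dataclass
class BusTransaction:
    command: Command             # control group
    address: int                 # address group: where to read or write
    data: Optional[int] = None   # data group: value to store (unused for reads)


class ToyMemoryModule:
    """Hypothetical memory module holding `size` cells, all initially 0."""

    def __init__(self, size: int) -> None:
        self.cells: List[int] = [0] * size

    def handle(self, tx: BusTransaction) -> Optional[int]:
        if tx.command is Command.WRITE:
            # Data travels from the memory controller to the memory.
            self.cells[tx.address] = tx.data
            return None
        # Data travels from the memory to the memory controller.
        return self.cells[tx.address]


module = ToyMemoryModule(size=16)
module.handle(BusTransaction(Command.WRITE, address=3, data=42))
value = module.handle(BusTransaction(Command.READ, address=3))
```

After the write, the read at the same address hands back the stored value over the "data" field, while the "address" and "command" fields play the roles of the address and control wires.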

The memory speeds (clock rates), maximum capacity per memory module, total maximum capacity, and types (DDR, DDR2, DDR3, etc.) that a system can accept are defined by the memory controller. For example, if a given memory controller only supports DDR3 memories up to 1,333 MHz, you won’t be able to install DDR2 memories, and if you install DDR3 memories above 1,333 MHz (e.g., 1,866 MHz or 2,133 MHz modules), they will be accessed at 1,333 MHz.

(An exception to this rule is when the motherboard allows you to configure the RAM at a clock rate above the official maximum supported by the memory controller. For example, Intel CPUs that were current at the time of writing officially support memories up to 1,333 MHz, but several motherboards will allow you to configure clock rates up to 2,133 MHz.)
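The rule and its exception can be summarized in a few lines of code. This is a minimal sketch under stated assumptions: the function `effective_memory_clock` and its parameters, including `board_override_mhz`, are hypothetical names chosen for illustration, and real systems pick from a set of supported speed steps rather than any arbitrary value.

```python
from typing import Optional, Set


def effective_memory_clock(
    supported_types: Set[str],
    controller_max_mhz: int,
    module_type: str,
    module_mhz: int,
    board_override_mhz: Optional[int] = None,
) -> int:
    """Return the clock rate (in MHz) at which the module would be accessed.

    board_override_mhz models a motherboard setting that raises the ceiling
    above the controller's official maximum (hypothetical parameter).
    """
    if module_type not in supported_types:
        # e.g. DDR2 modules on a DDR3-only memory controller
        raise ValueError(f"{module_type} is not supported by this memory controller")
    ceiling = controller_max_mhz if board_override_mhz is None else board_override_mhz
    # A module faster than the ceiling is simply run at the ceiling.
    return min(module_mhz, ceiling)


# DDR3 modules rated at 1,866 MHz on a controller limited to 1,333 MHz
stock = effective_memory_clock({"DDR3"}, 1333, "DDR3", 1866)
# Same modules on a motherboard that allows configuring up to 2,133 MHz
tuned = effective_memory_clock({"DDR3"}, 1333, "DDR3", 1866, board_override_mhz=2133)
```

Here `stock` comes out as 1333 and `tuned` as 1866, matching the behavior described in the two paragraphs above.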

The discussion about clock rates is really important, because the clock rate defines the available bandwidth, which is our next subject.
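As a rough preview of that relationship, the sketch below estimates a theoretical peak transfer rate from the clock rate. It assumes a 64-bit data bus per channel, which is the usual width for DDR, DDR2, and DDR3 modules but is not stated in this section, and it treats the quoted clock rate (e.g., 1,333 MHz) as the number of transfers per second in millions. The function name `peak_bandwidth_mb_s` is hypothetical, and real-world throughput is lower than this maximum.

```python
def peak_bandwidth_mb_s(
    effective_clock_mhz: float,
    bus_width_bits: int = 64,  # assumption: 64 data wires per channel
    channels: int = 1,         # 1 = single, 2 = dual, 3 = triple, 4 = quad
) -> float:
    """Theoretical peak transfer rate in MB/s (decimal megabytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return effective_clock_mhz * bytes_per_transfer * channels


single = peak_bandwidth_mb_s(1333)             # 10664.0 MB/s
dual = peak_bandwidth_mb_s(1333, channels=2)   # 21328.0 MB/s
```

Doubling the number of channels doubles the theoretical figure, which is the effect the dual-, triple-, and quad-channel techniques aim for.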
